Installing Hadoop and using libhdfs

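- Following the official Apache Hadoop documentation, the first step is to set up Java. On Ubuntu you can install OpenJDK 8 with its development files directly (the `openjdk-8-jdk` package), or check which Java installations are already on the machine:

```bash
sudo update-alternatives --config java
```

- Configure passwordless SSH login: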

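```bash
sudo apt install ssh
sudo apt install pdsh

# Generate a key pair with an empty passphrase and authorize it for this machine
ssh-keygen -t rsa -P '' -f ~/.ssh/id_rsa
cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys
chmod 0600 ~/.ssh/authorized_keys

# This should now log in without asking for a password
ssh localhost
```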
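The `chmod 0600` step matters because sshd uses `StrictModes yes` by default. In that mode it refuses an `authorized_keys` file whose permissions are too open, and `ssh localhost` then falls back to asking for a password. The C++17 sketch below makes that rule explicit by checking that the file grants no permissions to group or others. `isPrivateKeyFile` and `checkAuthorizedKeys` are hypothetical helpers, not part of any Hadoop or OpenSSH API. The check is also deliberately stricter than what sshd itself requires.

```cpp
// Hypothetical helper: verify ~/.ssh/authorized_keys is private (no group/other bits),
// matching the `chmod 0600 ~/.ssh/authorized_keys` step. Requires C++17.
#include <cstdio>
#include <cstdlib>
#include <filesystem>
#include <system_error>

namespace fs = std::filesystem;

// True if `path` is an existing regular file with no group or other permissions.
bool isPrivateKeyFile(const fs::path& path) {
    std::error_code ec;
    const fs::file_status st = fs::status(path, ec);
    if (ec || !fs::is_regular_file(st)) return false;  // missing or unreadable
    const fs::perms groupOrOthers = fs::perms::group_all | fs::perms::others_all;
    return (st.permissions() & groupOrOthers) == fs::perms::none;
}

// Checks $HOME/.ssh/authorized_keys and prints a hint when it is missing or too open.
bool checkAuthorizedKeys() {
    const char* home = std::getenv("HOME");
    if (home == nullptr) {
        std::fprintf(stderr, "HOME is not set\n");
        return false;
    }
    const fs::path keys = fs::path(home) / ".ssh" / "authorized_keys";
    if (!isPrivateKeyFile(keys)) {
        std::fprintf(stderr, "%s is missing or too permissive; try: chmod 0600 %s\n",
                     keys.c_str(), keys.c_str());
        return false;
    }
    return true;
}
```

Compile with `g++ -std=c++17`. In practice `ls -l ~/.ssh/authorized_keys` shows the same information; the sketch only spells out the rule.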

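- Download Hadoop. Two releases are offered, source (src) and binary. The source release suits people who want to modify Hadoop, while the binary release runs as-is, so the binary is usually the right choice. This post uses version 3.3.4. Extract the archive and enter the Hadoop directory.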

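- Edit `etc/hadoop/hadoop-env.sh` and adjust the values to your own setup. Hadoop 3's start scripts require the `*_USER` variables when the daemons are run as root: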

```bash
export JAVA_HOME=/usr/lib/jvm/java-8-openjdk-amd64
export JRE_HOME=/usr/lib/jvm/java-8-openjdk-amd64/jre

# Users the HDFS and YARN daemons run as (needed when starting them as root)
export HDFS_NAMENODE_USER="root"
export HDFS_DATANODE_USER="root"
export HDFS_SECONDARYNAMENODE_USER="root"
export YARN_RESOURCEMANAGER_USER="root"
export YARN_NODEMANAGER_USER="root"
```
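The `JAVA_HOME` above and the build command later in this post both assume the JDK 8 layout under `/usr/lib/jvm/java-8-openjdk-amd64`. In that layout `libjvm.so` sits in `jre/lib/amd64/server`; JDK 9 and later moved it to `lib/server`. The C++17 sketch below reports which expected file is missing under a given `JAVA_HOME`. `missingJdk8Files` and `checkJavaHome` are hypothetical names, and the list of expected files is an assumption based on the paths used in this post.

```cpp
// Hypothetical check that a JAVA_HOME matches the JDK 8 layout assumed in this post.
#include <cstdio>
#include <filesystem>
#include <system_error>
#include <vector>

namespace fs = std::filesystem;

// Returns the expected JDK 8 files that are missing under `javaHome`.
// JDK 9+ keeps libjvm.so in lib/server instead, so it would fail this check.
std::vector<fs::path> missingJdk8Files(const fs::path& javaHome) {
    const std::vector<fs::path> expected = {
        javaHome / "bin" / "java",                                   // java launcher
        javaHome / "jre" / "lib" / "amd64" / "server" / "libjvm.so"  // linked with -ljvm
    };
    std::vector<fs::path> missing;
    for (const fs::path& p : expected) {
        std::error_code ec;  // treat errors such as EACCES like "missing"
        if (!fs::is_regular_file(p, ec)) missing.push_back(p);
    }
    return missing;
}

// Prints each missing file to stderr and returns true if none are missing.
bool checkJavaHome(const fs::path& javaHome) {
    const std::vector<fs::path> missing = missingJdk8Files(javaHome);
    for (const fs::path& p : missing)
        std::fprintf(stderr, "JAVA_HOME check: missing %s\n", p.c_str());
    return missing.empty();
}
```

On a machine set up as described here, `checkJavaHome("/usr/lib/jvm/java-8-openjdk-amd64")` should return `true`.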

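- Configure the environment variables, either in `/etc/profile` (applies to all users) or in `~/.bashrc` (current user only). I chose the global option and added the following. Adjust `HADOOP_HOME` to wherever you extracted Hadoop (`下载` is the Downloads folder on a Chinese-locale system). Run `source /etc/profile`, or log in again, for the changes to take effect:

```bash
# Defined first, because LD_LIBRARY_PATH below refers to $JAVA_HOME
export JAVA_HOME=/usr/lib/jvm/java-8-openjdk-amd64
export JRE_HOME=/usr/lib/jvm/java-8-openjdk-amd64/jre

# Make pdsh (used by start-dfs.sh) connect over ssh
export PDSH_RCMD_TYPE=ssh

export HADOOP_HOME=/home/***/下载/hadoop-3.3.4

# libhdfs starts a JVM that does not expand classpath wildcards, so expand the jars with --glob
export CLASSPATH=$($HADOOP_HOME/bin/hadoop classpath --glob):$CLASSPATH

# libhdfs.so is in lib/native; libjvm.so is in the JVM's server directory
export LD_LIBRARY_PATH=$HADOOP_HOME/lib/native:$LD_LIBRARY_PATH:$JAVA_HOME/jre/lib/amd64/server
```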

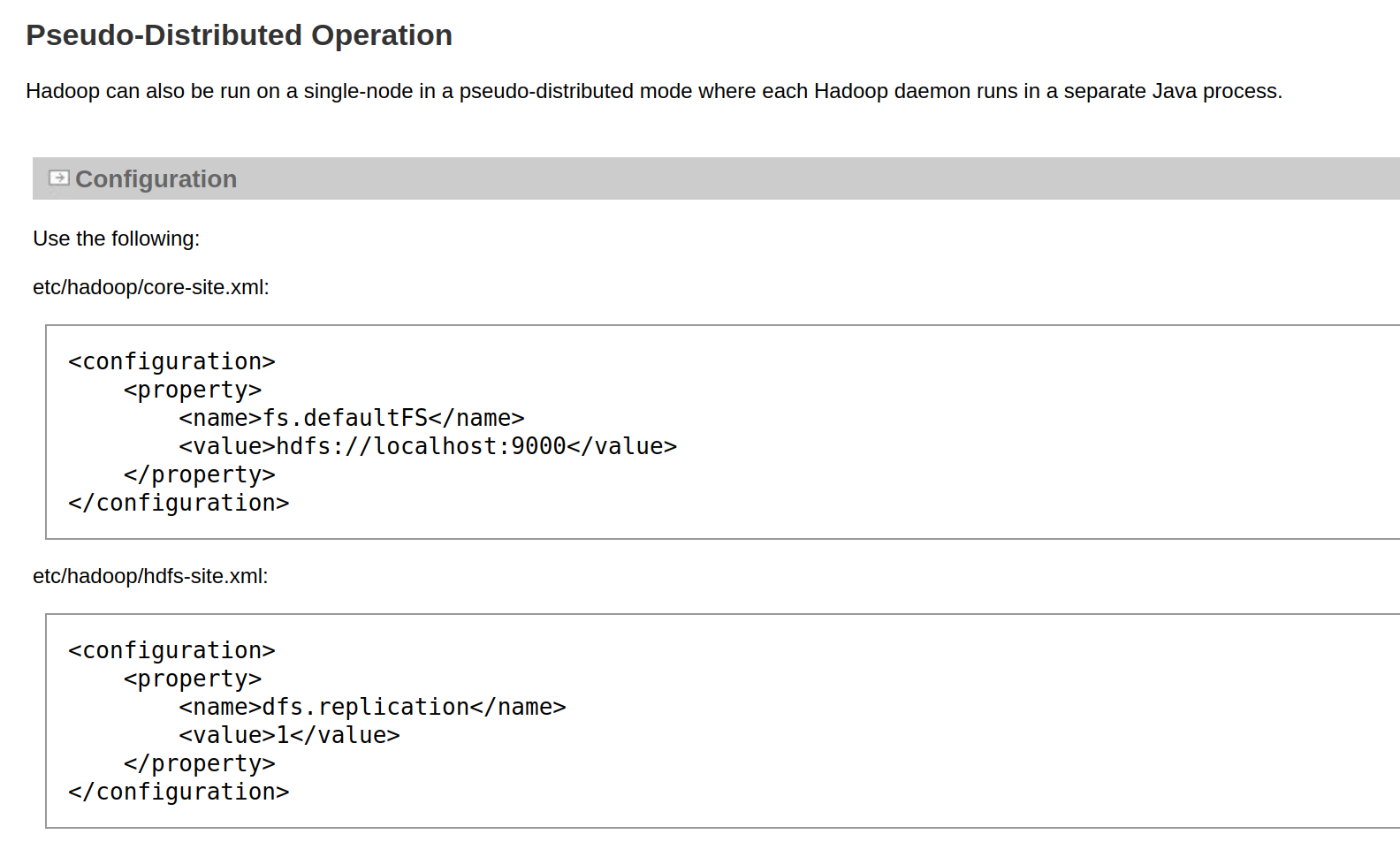
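libhdfs is a JNI wrapper that starts a JVM inside your program. In this setup, the dynamic loader must therefore find `libhdfs.so` and `libjvm.so` through `LD_LIBRARY_PATH`, and the JVM must find the Hadoop jars through `CLASSPATH`. A JVM created this way does not expand `*` wildcard entries, which is why the classpath is generated with `hadoop classpath --glob`. The C++17 sketch below checks those conditions. All of its function names are hypothetical, and looking only for the two `.so` files is a simplifying assumption.

```cpp
// Hypothetical pre-flight check for the environment libhdfs needs at run time.
#include <cstdio>
#include <cstdlib>
#include <filesystem>
#include <string>
#include <system_error>
#include <vector>

namespace fs = std::filesystem;

// Splits a colon-separated list (CLASSPATH, LD_LIBRARY_PATH), skipping empty items.
std::vector<std::string> splitPathList(const std::string& s) {
    std::vector<std::string> parts;
    std::string::size_type start = 0;
    while (start <= s.size()) {
        const std::string::size_type end = s.find(':', start);
        const std::string item =
            s.substr(start, end == std::string::npos ? std::string::npos : end - start);
        if (!item.empty()) parts.push_back(item);
        if (end == std::string::npos) break;
        start = end + 1;
    }
    return parts;
}

// True if any classpath entry contains '*', which the embedded JVM will not expand.
bool classpathHasWildcard(const std::string& classpath) {
    for (const std::string& entry : splitPathList(classpath))
        if (entry.find('*') != std::string::npos) return true;
    return false;
}

// True if `fileName` exists in at least one directory of a colon-separated list.
bool foundInPathList(const std::string& dirs, const std::string& fileName) {
    for (const std::string& dir : splitPathList(dirs)) {
        std::error_code ec;
        if (fs::exists(fs::path(dir) / fileName, ec)) return true;
    }
    return false;
}

// Returns human-readable problems; an empty result means the environment looks usable.
std::vector<std::string> libhdfsEnvProblems(const std::string& classpath,
                                            const std::string& ldLibraryPath) {
    std::vector<std::string> problems;
    if (classpath.empty())
        problems.push_back("CLASSPATH is empty; set it from `hadoop classpath --glob`");
    else if (classpathHasWildcard(classpath))
        problems.push_back("CLASSPATH has '*' entries; regenerate it with `hadoop classpath --glob`");
    if (!foundInPathList(ldLibraryPath, "libhdfs.so"))
        problems.push_back("libhdfs.so not found via LD_LIBRARY_PATH (expected $HADOOP_HOME/lib/native)");
    if (!foundInPathList(ldLibraryPath, "libjvm.so"))
        problems.push_back("libjvm.so not found via LD_LIBRARY_PATH (expected $JAVA_HOME/jre/lib/amd64/server)");
    return problems;
}

// Reads the real environment, prints any problems, and returns true if none were found.
bool reportLibhdfsEnv() {
    const char* cp = std::getenv("CLASSPATH");
    const char* ld = std::getenv("LD_LIBRARY_PATH");
    const std::vector<std::string> problems = libhdfsEnvProblems(cp ? cp : "", ld ? ld : "");
    for (const std::string& p : problems) std::fprintf(stderr, "libhdfs env: %s\n", p.c_str());
    return problems.empty();
}
```

A missing `libhdfs.so` or `libjvm.so` stops a dynamically linked program before `main` even runs. The library checks are therefore most useful in a separate diagnostic binary that does not link `-lhdfs`. The CLASSPATH check, by contrast, can also run at the top of `main`, before `hdfsConnect`.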

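- Initialize and start a single-node pseudo-distributed cluster. This assumes `etc/hadoop/core-site.xml` sets `fs.defaultFS` to `hdfs://localhost:9000` and `hdfs-site.xml` sets `dfs.replication` to 1, as in the official single-node setup guide. The C++ example below connects to that address.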

```bash
# Format the NameNode (first run only)
bin/hdfs namenode -format

# Start HDFS
sbin/start-dfs.sh

# Stop HDFS
sbin/stop-dfs.sh
```
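`start-dfs.sh` starts the daemons in the background, so a NameNode that fails at startup is easy to miss. The C++ example later in this post connects to `localhost:9000`. Assuming `fs.defaultFS` is `hdfs://localhost:9000`, the value in the official single-node guide, a quick TCP probe of that port shows whether anything is listening before you run a libhdfs program. This is a hedged POSIX-sockets sketch, and `tcpPortOpen` and `nameNodeListening` are hypothetical names. An open port only proves that something is listening, not that HDFS is healthy; `jps` or `bin/hdfs dfsadmin -report` give a fuller picture.

```cpp
// Hypothetical probe: is anything accepting TCP connections on the NameNode RPC port?
#include <arpa/inet.h>
#include <netinet/in.h>
#include <sys/socket.h>
#include <unistd.h>
#include <cstdint>

// True if a TCP connect() to ipv4:port succeeds. On loopback a closed port is
// refused immediately, so no timeout handling is added here (an assumption).
bool tcpPortOpen(const char* ipv4, std::uint16_t port) {
    const int fd = ::socket(AF_INET, SOCK_STREAM, 0);
    if (fd < 0) return false;
    sockaddr_in addr{};
    addr.sin_family = AF_INET;
    addr.sin_port = htons(port);
    if (::inet_pton(AF_INET, ipv4, &addr.sin_addr) != 1) {  // not a dotted IPv4 address
        ::close(fd);
        return false;
    }
    const bool ok =
        ::connect(fd, reinterpret_cast<const sockaddr*>(&addr), sizeof addr) == 0;
    ::close(fd);
    return ok;
}

// Assumes fs.defaultFS = hdfs://localhost:9000, matching hdfsConnect("localhost", 9000).
bool nameNodeListening() { return tcpPortOpen("127.0.0.1", 9000); }
```

Run right after `sbin/start-dfs.sh` and again after `sbin/stop-dfs.sh`, `nameNodeListening()` should return `true` and then `false`.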

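- Try compiling a C++ libhdfs example. Save the program below as `hdfs.cpp` and build and run it with the following commands. The `CLASSPATH` and `LD_LIBRARY_PATH` settings from `/etc/profile` must be in effect at run time, because libhdfs starts a JVM inside the program:

```bash
g++ -o hdfs hdfs.cpp -L $HADOOP_HOME/lib/native -lhdfs -I $HADOOP_HOME/include -L $JAVA_HOME/jre/lib/amd64/server/ -ljvm
./hdfs
```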

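```cpp
// hdfs.cpp: write "hello hdfs" to /first.txt on the local HDFS
#include <fcntl.h>   // O_WRONLY
#include <cstdio>
#include <cstring>
#include "hdfs.h"

int main()
{
    // Connect to the NameNode at localhost:9000 (must match fs.defaultFS)
    hdfsFS fs = hdfsConnect("localhost", 9000);
    if (!fs) {
        std::fprintf(stderr, "connect fail\n");
        return -1;
    }

    // O_WRONLY creates or overwrites the file; buffer 4096 bytes,
    // replication and block size 0 = use the configured defaults
    hdfsFile writeFile = hdfsOpenFile(fs, "/first.txt", O_WRONLY, 4096, 0, 0);
    if (!writeFile) {
        std::fprintf(stderr, "open file fail\n");
        hdfsDisconnect(fs);
        return -1;
    }

    const char *msg = "hello hdfs";
    const tSize written = hdfsWrite(fs, writeFile, msg, static_cast<tSize>(std::strlen(msg)));
    if (written < 0) {
        std::fprintf(stderr, "write fail\n");
    }

    // Closing the file completes the write; report a failure here as well
    const int closed = hdfsCloseFile(fs, writeFile);
    hdfsDisconnect(fs);
    return (written < 0 || closed != 0) ? -1 : 0;
}
```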

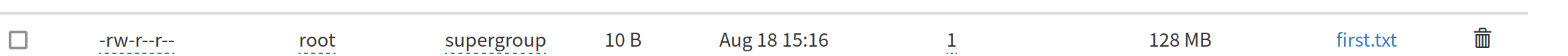

结果入图:


https://blog.427221.xyz/archives/hadoop-an-zhuang-he-libhdfs-shi-yong